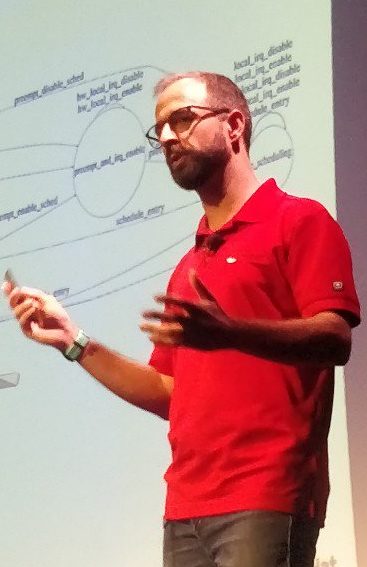

Formal modeling made easy — Daniel Bristot de Oliveira

Linux is becoming more and more critical, and yet is a quite complex system. We need more ways to show that the properties of the system are correct.

Daniel asks how do we check that our reasoning is right with regard to the behaviour of the system ? Usually, to answer these questions, computer scientists point to formal methods. But they can be hard to grok, or apply to big projects like Linux. But, there are examples of successes with formal methods: the memory model helped catch bugs for example.

Combining state machines in code with formal methods gives Automata. Automata are defined with a finite set of states and a finite set of events. They can be combined to create more complex systems.

Daniel shows an example with a simple open, close read and write use case. Where using a model found a deadlock bug. As part of his Phd, Daniel created the PREEMPT task model: it has 9017 states, and 23103 transitions. But, he didn’t do it all at once, he broke the problem in multiple parts. During the development, he found 3 bugs that could not be found with any other method.

He showed how to translate scheduling conditions from code, to a more formal representation of sufficient conditions: for example, it’s not possible to preempt when irqs are disabled. His work has been academically accepted: he published three papers on IRQs, then threads and their behaviour in PREEMPT_RT.

How to verify at runtime that the behavior of Linux is what we expect ? His methodology was to use tracing with perf with offline verification. Then he wrote validation tools that compared it with a model, and iterated over the model. Once the model was complete enough, he found bugs in the logical correctness of the Linux task model.

Daniel then moved from offline to runtime verification. He converted his model to C code that defined state and events to represent the automaton as struct and enums. His code was simple enough, that getting and verifying the state and transitions was O(1). The verification code runs as a kernel module, that can be silent or print everything in trace. When an error happens, the stacktrace is printed.

That’s how Daniel found a bug in the tracepoint handling of the Linux kernel.

Daniel says that his method is applicable to smaller models, that can be used as “test cases”: one would design a simple model for a part of the behavior of the Linux kernel. He benchmarked this with a simple preemption verification model, and found that in normal use case, impact was negligible. With benchmarks that have big kernel usage, the impact was much bigger, but still more efficient than just using tracing.

In conclusion, Daniel says it’s possible to model complex behavior of Linux. He says that automata is good first step to that. It can be used create big models from small ones. It’s even possible to verify the runtime behavior of Linux.

There’s still work to do model more parts of the Linux kernel, like locking as lockdep does, RCU or the scheduler.

Kernel documentation: past, present, and future — Jonathan Corbet

Documentation is a crucial aid for our users, Jonathan says. It’s also helpful for developers, first for understanding, then for fixing how things work.

About 90% of the kernel code is written by paid developers nowadays. But no one is paid to write kernel documentation. The kernel has a well defined tree hierarchy maintainer model. And it closely matches the source code filesystem hierarchy. But the Documentation/ directory does not match this model: everybody touches documentation. Which is probably for the best.

But in 2016, Jon found that there was 2000 .txt files in the kernel Documentation/ directory; there was about 34 Docbook files, and lots of Kerneldoc comments spread throughout the code. It had a fragile, complex and home-made build system; there was no markup in kerneldoc comments, which generated an ugly formatted output.

That’s when the kernel community, led by Jon and Mauro, decided to create something better. It needed to preserve the text-driven format. In Linux 4.8, DocBook was replaced by Sphinx, which uses restructuredText as format. Kerneldoc comments could now use RST formatting, and the DocBook build system was deleted.

There are now 3149 files in Documentation/, 2100+ of which are in rst. The majority of the documentation is now in .rst format. The kerneldoc comments in the source code now are formatted automatically, with links to functions documentation, and index is generated. It’s possible to generate PDF, EPUB formats. The incremental builds are now faster. And installing dependencies for documentation building is as simple as running a script.

There are still build warnings; for example when a function parameter isn’t documented. There aren’t being fixed as fast as possible. Jon says that there’s the need to convert the remaining .txt files as well. But one shouldn’t just convert files that have outdated information as-is. Obsolete documentation should be deleted.

While conversion to RST is easy, evaluating documentation relevance and correctness to match reality is much harder, and is what Jon is looking for contributors with.

When writing documentation, one should think about who are the readers, and what are their needs. Different readers have different needs, which is why Jonathan has been pushing for re-organization in “books”. The core-api has its own directory, user-api/ as well, the process/ as well that describes how to participate in kernel development. There’s a book for admins, for kernel developer tools, or the driver api.

There are still missing pieces, Jonathan says. There’s no guide for new maintainers, the rules are un-written. There’s no subsystem-specific guides for developers; each subsystem has its own workflow, rules for commit message, etc.

Jon also wants to improve or replace the scripts/kernel-doc perl program to parse kerneldoc. PDF generation depends on latex, which is big dependency. Sphinx stylesheets are still ugly according to Jon. There was work recently to autoconvert function() names to Sphinx links in 5.3, simplifying writing of documentation further.

Please write more documentation, Jonathan concludes.

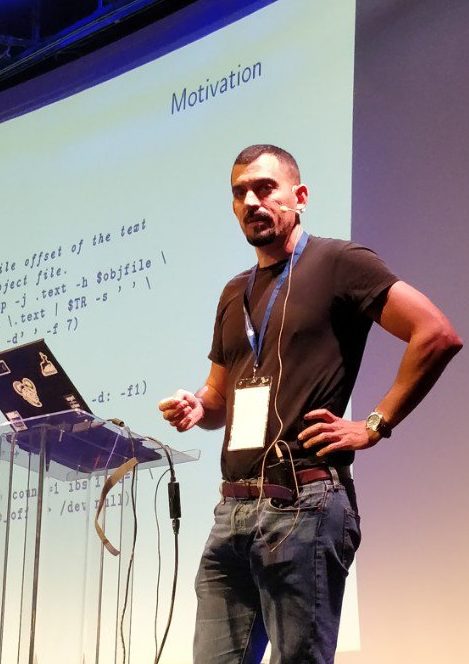

GNU poke, an extensible editor for structured binary data — Jose E Marchesi

As part of his work on the GNU toolchains, Jose sometimes need to edit object files, and he writes scripts on top of binutils to edit binary data which are hard to maintain.

So he decided to write a general purpose binary editor. But he didin’t want it to operate on bytes and offsets: he wanted to understand structured data. He start with C structure definitions, but this wasn’t enough because compilers can modify their memory representation, with padding for alignment for example. He looked at what existed, but didn’t find any fully satisfactory solution.

Jose gives a demo of poke: it’s a command line tool, with an interactive command line interface. It supports hexdumping, and a domain specific language (DSL) to define variables, as well as struct definitions. He loads a pre-defined set of definitions for the ELF structures, and start operating on a minimal ELF object.

The poke DSL can define functions to ease access to fields that depend on other fields for example. It’s possible to define arrays unbounded, or bounded either by number of elements or total size.

A specificity of the poke DSL is the way it handles offsets. It supports both bytes-oriented and bits-oriented with units values. A unit size can be defined in bits, bytes or kB. It’s also possible to define bitfields. And it can also use any defined types’ size as a unit size.

A powerful poke feature is the constraints. They define rules on a given type definition. And when combined with poke unions, it can be used to define which type will follow depending on a set of constraints.

Defined types can be mapped to files. Working on types defined in-memory and in mapped variables is exactly the same.

In conclusion, despite not having been a first release yet, it’s very powerful, and available for contributors.

RCU in 2019 — Joel Fernandes

Joel says he always wanted to understand RCU internals, so he jumped in, until it was to the point that he understood enough to help with his work and to contribute.

RCU started as a nice and simple algorithm, but it evolved over the years into a complex beast to be able to handle the real-world constraints of the Linux kernel.

To synchronize multiple reader and writers to a data structure, the basic synchronization primitive is the reader-writer lock. RCU is a synchronization primitive with the same goal. It works by having the writer wait for all CPUs that might have a reader to have passed through a quiescent state (no longer read): this is a grace period.

Since multiple readers can copy the pointer to the data, synchronize_rcu() waits for all the readers to have unlocked their copy: modification by the writers are then propagated. The grace period is always longer than the longest reader.

RCU is the fastest read-mostly synchronization primitive. But, writes are costly; so multiple writers can share a single grace period, and at the end of the grace period, the last write will propagate.

Joel shows the Toy RCU documented in WhatIsRCU.txt : it only works on non-preemptible kernels, but is very simple to understand.

Tree-RCU is the most complex and widely used flavor of RCU. It builds a tree of struct rcu_node, each having one rcu_data per CPU. Each CPU reports a quiescent state(QS) to its rcu node; and then the root node gets propagated updates, until grace period (GP) is reached. When a CPU is idle, its state is automatically reported as quiescent (QS) by the grace period thread.

Reaching the grace period raises softirq that calls the callback that wakes up the threads that called synchronize_rcu.

In Linux, there are two types of quiescent states (QS): light weight and heavy weight. Only the latter can end the grace period(GP), but only once the former was passed through.

At around 100ms after the GP has started, rcu code starts interacting with the scheduler to ask it to reschedule tasks that might be blocking, setting need_resched. After 200ms, it asks even strongly with cond_resched(). At 300ms for NOHZ_FULL CPUs, it sends an IPI to CPUs that are still holding the end of grace period. After 1 second, the task’s need_qs flag is set. If a task holds a CPU for to long, the GP can’t end and it’s an RCU stall.

There has been work recently to consolidate the various types of RCU flavours in the kernel. All the flavours now share a GP, and the state machines are consolidated.

That’s it for this edition of Kernel Recipes. Congratulations for reading all of this live blog !

Trackbacks/Pingbacks